You can have a perfectly written article, a well-designed page, and strong backlinks pointing to it, and still have zero organic traffic. If search engines cannot crawl or index your page, it simply does not exist in search results. Not buried on page ten. Completely absent.

This guide explains what crawlability and indexing mean, how each works, why they are different problems requiring different fixes, and what you can do to ensure every important page on your site is discoverable, indexed, and eligible to rank.

Crawlability and indexing: what each one means

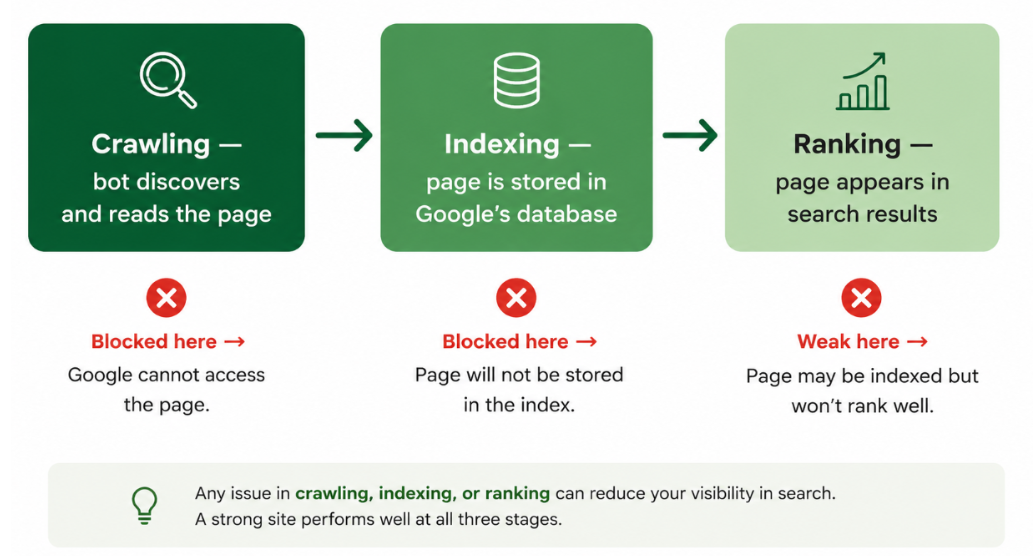

Crawlability and indexing are two separate stages of how a search engine processes your site. They are often mentioned together because they both need to work correctly for a page to rank, but a failure in one does not always mean a failure in the other, and the fixes are completely different.

| Stage | What happens | What controls it | Result if it fails |
|---|---|---|---|
| Crawling | A search engine bot visits your page by following links or a sitemap, reads the HTML, and discovers content and further links | robots.txt, internal links, site architecture, page speed, server response | Page is never discovered or read, so it cannot be indexed or ranked |
| Indexing | The crawled content is evaluated, stored in the search engine's database, and made eligible to appear in results | Meta robots tags, canonical tags, content quality, duplicate content signals | Page is discovered but not stored, so it cannot rank even if crawled perfectly |
| Ranking | Indexed pages are ranked for relevant queries based on relevance, authority, and user signals | Content quality, backlinks, on-page SEO, Core Web Vitals | Page exists in the index but does not appear prominently in results |

The key distinction: crawlability is about access. Indexing is about acceptance. A bot can crawl a page and then choose not to index it if the content seems thin, duplicated, or explicitly marked as non-indexable. And a page can be technically crawlable, but never actually discovered if internal linking is weak and it is not in the sitemap. Both stages must work correctly before any ranking can happen.

How crawling works

Search engines use automated programs called crawlers, spiders, or bots to discover content on the web. Googlebot is Google's primary crawler. It starts from known URLs (your sitemap, previously crawled pages, and external links pointing to your site) and follows the links it finds, reading page content and discovering new URLs as it goes.

Crawl budget and why it matters

Every website is allocated a crawl budget: the number of pages a search engine will crawl within a given time period. For small sites, this rarely causes problems. For large sites with thousands of pages, crawl budget becomes a genuine strategic concern. If crawlers spend their budget on low-value URLs (thin content, duplicate parameter URLs, paginated pages with no unique value, or URLs carrying session IDs), important pages may be crawled less frequently or not at all.

Google has stated that crawl budget is determined by two factors: crawl capacity (how fast it can crawl without overloading your server) and crawl demand (how popular and fresh your pages appear to be). A site that loads slowly, returns errors frequently, or has thousands of duplicate URL variants will have a lower effective crawl budget than a fast, clean site with well-managed architecture. The site architecture guide covers how to structure your site so crawlers reach important pages efficiently.
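One rough way to see whether budget is leaking is to measure how much crawler activity lands on parameter variants. The sketch below is illustrative only: it assumes you already have a list of URLs requested by a crawler (for example, extracted from server logs), and it treats "has a query string" as a crude proxy for a low-value duplicate, which will not hold for every site. The function name and the sample URLs are hypothetical.

```python
from urllib.parse import urlsplit


def crawl_waste_share(crawled_urls):
    """Return the fraction of crawled URLs that carry a query string."""
    if not crawled_urls:
        return 0.0
    with_params = sum(1 for url in crawled_urls if urlsplit(url).query)
    return with_params / len(crawled_urls)


# Hypothetical sample of URLs a crawler requested, e.g. taken from server logs.
sample = [
    "https://example.com/product",
    "https://example.com/product?color=blue",
    "https://example.com/product?color=red&sessionid=abc",
    "https://example.com/blog/crawl-budget",
]

share = crawl_waste_share(sample)
print(f"{share:.0%} of crawled URLs were parameter variants")
```

A high share does not prove a problem on its own, but it is a reasonable prompt to check which parameters generate the extra URLs.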

What blocks crawlers from accessing your site?

Crawl barriers generally fall into two categories: intentional restrictions and accidental technical issues. Some blocks are placed deliberately to keep pages out of search results, while others silently prevent search engines from accessing valuable content.

Intentional crawl restrictions

These are settings added on purpose to control what search engines can access or index, including:

robots.txt disallow rules

noindex tags or directives

password-protected or gated pages

restricted staging or development environments

These controls are useful when applied correctly, but mistakes can unintentionally hide important pages from search engines.

Accidental crawl blockers

Accidental crawl issues are often more damaging because they can go unnoticed for weeks. Common examples include:

JavaScript-rendered content that appears blank in raw HTML

orphan pages with no internal links pointing to them

redirect chains that waste crawl budget

broken internal linking structures

server instability or slow-loading pages

overly aggressive bot-blocking rules targeting AI scrapers

In severe cases, a single incorrect robots.txt rule can prevent Googlebot from accessing large sections of a website, leading to traffic loss and deindexing issues before the problem is discovered.

Crawlability in 2026: AI bots and robots.txt complexity

Robots.txt has become significantly more complex in 2026. Sites now need to manage access not just for Googlebot and Bingbot but for a growing fleet of AI crawlers. GPTBot blocking in robots.txt files increased by 55% year-over-year between 2024 and 2025 as publishers responded to concerns about AI training data harvesting. The problem is that many of these rules also catch AI search and retrieval bots, the ones that determine whether your content appears in ChatGPT, Perplexity, and Claude responses.

Each AI platform operates separate crawlers for training versus retrieval. Anthropic runs ClaudeBot (training), Claude-User (real-time retrieval), and Claude-SearchBot (search indexing). OpenAI runs GPTBot (training), ChatGPT-User (retrieval), and OAI-SearchBot (search indexing). Blocking every bot from a single platform blocks all of these functions at once, removing your content from AI citation pools even if the intention was only to restrict model training. Managing this correctly requires evaluating each user agent individually in your robots.txt configuration.
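To make the per-agent evaluation concrete, here is a minimal sketch using Python's standard-library `urllib.robotparser`. The robots.txt content and the test URL are made up for illustration, and Python's matching logic is only an approximation: each real crawler interprets robots.txt with its own parser, so treat the output as a sanity check rather than a guarantee.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block training crawlers, leave retrieval bots alone.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: *
Disallow: /admin/
"""


def allowed_agents(robots_txt, url, agents):
    """Return {user_agent: True/False} for whether each agent may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


agents = ["Googlebot", "GPTBot", "ChatGPT-User", "ClaudeBot", "Claude-User"]
result = allowed_agents(ROBOTS_TXT, "https://example.com/blog/post", agents)

for agent, allowed in result.items():
    print(f"{agent}: {'allowed' in str(allowed) or ('allowed' if allowed else 'blocked')}")
```

In this example the training crawlers (GPTBot, ClaudeBot) are blocked while Googlebot and the retrieval agents fall through to the `*` group and remain allowed. Running the same check after every robots.txt change is a cheap way to catch a rule that blocks more than intended.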

How indexing works

After a page is crawled, Google decides whether to add it to its index, the massive database it queries every time someone runs a search. This evaluation is not automatic. Google assesses the page's content quality, checks for duplicate or near-duplicate content elsewhere, evaluates whether canonical signals point here or elsewhere, and applies quality signals from the broader site.

What controls whether a page gets indexed

The most direct control you have over indexing is the meta robots tag in the page's HTML head. A page marked "noindex" will be crawled but not stored in the index. A page marked "index" (or with no robots directive at all, since index is the default) is eligible for indexing, though Google still reserves the right to decline if content quality is poor.
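A quick way to audit this is to parse a page's HTML and look for a robots meta tag. The sketch below uses only the standard-library `html.parser`; the class and function names are hypothetical. It checks `<meta name="robots">` only, so it will miss bot-specific meta tags and any directive sent through the `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() == "robots":
            for token in attr.get("content", "").split(","):
                if token.strip():
                    self.directives.add(token.strip().lower())


def is_noindex(html):
    """Return True if the HTML carries a robots meta tag containing noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


blocked_page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
default_page = "<html><head><title>No directive</title></head></html>"

print(is_noindex(blocked_page))
print(is_noindex(default_page))
```

The second page has no robots directive at all, which matches the default described above: it stays eligible for indexing.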

Canonical tags are the second key indexing signal. A canonical tag on page A pointing to page B tells Google that B is the preferred version, and Google will usually index B rather than A, regardless of which one it crawled first. The tag is a strong hint rather than a command, so Google can still pick a different canonical when other signals disagree. Canonical tags do not block crawling; they redirect indexing attention. This is how e-commerce sites handle product variants, filtered category pages, and paginated content without creating duplicate index entries. The full implementation process is covered in the canonicalization guide.

When Google chooses not to index a page

Even a page with no noindex tag and a correct canonical can be excluded from the index if Google determines it is not worth indexing. This happens most often with thin content pages that offer minimal unique value, near-duplicate pages that closely mirror other indexed pages, pages with no inbound links that Google interprets as low-importance, and pages that consistently load slowly or return intermittent errors during crawling.

Google Search Console's Page indexing report (formerly the Coverage report) is where you see this in practice. Pages can appear as "Crawled - currently not indexed", meaning Google visited the page and chose not to add it. This is different from "Discovered - currently not indexed" (Google knows about it but has not crawled it yet) and "Excluded by 'noindex' tag" (explicitly blocked). Each status points to a different fix.

| Search Console status | What it means | Most likely fix |
|---|---|---|
| Crawled - currently not indexed | Google visited the page but decided not to index it | Improve content quality, remove duplication, and add internal links to signal importance |
| Discovered - currently not indexed | Google knows the page exists but has not crawled it yet | Improve crawl budget efficiency, add the URL to the sitemap, and build internal links from important pages |
| Excluded by 'noindex' tag | Page has a noindex directive, intentional or accidental | Check whether noindex is intended; remove it if the page should rank |
| Duplicate, Google chose different canonical than user | Google selected a different URL as the canonical version | Review and correct canonical tags; ensure internal links point to the preferred URL |
| Page with redirect | URL redirects to another page, and the redirect target is indexed instead | Update internal links to point directly to the final destination URL |
| Soft 404 | Page returns a 200 status, but Google considers the content empty or near-empty | Add substantial unique content or implement a proper 404/410 response |
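For the "Page with redirect" fix, the work is mostly finding where each redirecting URL finally lands so internal links can be pointed there directly. The sketch below assumes you have exported a redirect map (source URL to target URL) from a crawl tool; the function name, the map, and the hop limit are all hypothetical.

```python
def resolve_redirect(url, redirects, max_hops=10):
    """Follow a redirect map to the final URL. Returns (final_url, hop_count)."""
    current = url
    seen = {url}
    hops = 0
    while current in redirects and hops < max_hops:
        current = redirects[current]
        hops += 1
        if current in seen:
            raise ValueError(f"redirect loop at {current}")
        seen.add(current)
    return current, hops


# Hypothetical redirect map: an HTTP-to-HTTPS hop followed by a moved page.
redirects = {
    "http://example.com/old": "https://example.com/old",
    "https://example.com/old": "https://example.com/new",
}

final_url, hop_count = resolve_redirect("http://example.com/old", redirects)
print(final_url, hop_count)
```

Any internal link whose hop count is above zero is a candidate for updating, and a count of two or more marks a redirect chain.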

How crawlability and indexing directly affect SEO visibility

The connection between crawl and index health and organic performance is direct and often underestimated. Every page that fails to get crawled is a page that cannot rank. Every page that gets crawled but not indexed is a wasted content investment. And every page that gets indexed with poor signals is competing at a disadvantage it did not need to have.

New content takes longer to rank when crawling is inefficient

If Google crawls your site infrequently because your crawl budget is being wasted on low-value pages or because your site is slow, new content may take weeks or months to be discovered and indexed. Sites with clean architecture, fast load times, and well-maintained sitemaps typically see new content indexed within hours to days. The gap compounds over time: a slow-to-index site consistently trails competitors who publish and rank the same content faster.

Ranking signals are split across duplicate URLs

Without proper canonicalization, the same content appearing at multiple URLs splits the link equity and ranking signals that should consolidate on one page. A product page accessible at both example.com/product and example.com/product?color=blue dilutes the authority that should flow to the canonical version. This is not a theoretical concern: 95.2% of sites have 3XX redirect issues, and 88% have HTTP-to-HTTPS redirect problems that create exactly this kind of unintended duplication at scale.

AI visibility depends on crawl access, not just Google

In 2026, crawlability affects more than Google rankings. AI platforms retrieve content through their own crawlers, and a site that is crawlable to Googlebot but blocks AI retrieval bots is invisible to ChatGPT, Perplexity, and Claude when those systems try to cite sources in real time. This means crawl configuration now has a direct impact on LLM visibility and AI citation frequency, a dimension that barely existed two years ago but is now central to how content gets discovered by an increasingly AI-assisted audience.

How to improve crawlability

Build a clean internal linking structure

Internal links are the primary navigation path for crawlers. A page with no internal links pointing to it, known as an orphan page, may be crawled rarely or never even if it is in the sitemap, because crawlers weight link-based discovery heavily over sitemap declaration. Every important page should have at least one internal link from a page that is itself well-linked and regularly crawled. Internal linking from high-authority hub pages to new or updated content is one of the fastest ways to accelerate crawl discovery for those pages.
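Orphan detection reduces to a graph reachability check: start at the homepage, follow internal links, and see which known pages were never reached. The sketch below assumes you already have an internal link map and a full page list (for example, from a crawl export plus your CMS); the data and function name are hypothetical.

```python
from collections import deque


def find_orphans(links, start, all_pages):
    """Return pages in all_pages that cannot be reached from start via links."""
    seen = {start}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return sorted(set(all_pages) - seen)


# Hypothetical internal link map: page -> pages it links to.
links = {
    "/": ["/blog", "/products"],
    "/blog": ["/blog/crawl-budget"],
    "/products": ["/products/widget"],
    "/landing/old-campaign": ["/products"],
}
all_pages = [
    "/",
    "/blog",
    "/blog/crawl-budget",
    "/products",
    "/products/widget",
    "/landing/old-campaign",
]

orphans = find_orphans(links, "/", all_pages)
print(orphans)
```

Here `/landing/old-campaign` links out to other pages but nothing links in to it, so it is reported as an orphan.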

Maintain a clean, accurate XML sitemap

Your XML sitemap is a direct communication channel to search engines, listing your important pages. It should contain only canonical, indexable URLs: no pages blocked by robots.txt, no redirected URLs, no noindex pages. Submitting a sitemap with non-indexable pages in it does not cause those pages to get indexed, but it does confuse the crawl signal and waste the attention Google pays to your sitemap entries. Submit your sitemap through Google Search Console and Bing Webmaster Tools, and update it automatically whenever pages are added or removed. The full setup process is in the XML sitemap guide.
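The filtering rule above can be expressed as a small generator step. This is a sketch under stated assumptions: the page records and their field names (`status`, `noindex`, `canonical`, `blocked_by_robots`) are hypothetical and would come from your own crawl data, and the output includes only `<loc>` entries.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def is_sitemap_worthy(page):
    """Keep only 200-status, indexable, self-canonical, crawlable URLs."""
    return (
        page["status"] == 200
        and not page["noindex"]
        and not page["blocked_by_robots"]
        and page["canonical"] == page["url"]
    )


def build_sitemap(pages):
    """Return sitemap XML as a string, listing only sitemap-worthy URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if is_sitemap_worthy(page):
            url = ET.SubElement(urlset, "url")
            ET.SubElement(url, "loc").text = page["url"]
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical crawl records.
pages = [
    {"url": "https://example.com/", "status": 200, "noindex": False,
     "blocked_by_robots": False, "canonical": "https://example.com/"},
    {"url": "https://example.com/old", "status": 301, "noindex": False,
     "blocked_by_robots": False, "canonical": "https://example.com/new"},
    {"url": "https://example.com/search", "status": 200, "noindex": True,
     "blocked_by_robots": False, "canonical": "https://example.com/search"},
    {"url": "https://example.com/product", "status": 200, "noindex": False,
     "blocked_by_robots": False, "canonical": "https://example.com/product"},
]

sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

Only the homepage and the product page survive the filter; the redirected and noindex URLs are left out.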

Audit and fix your robots.txt

Review your robots.txt file carefully, especially after any CMS migration, platform change, or addition of new disallow rules aimed at AI crawlers. Check it with the robots.txt report in Google Search Console, which replaced the older robots.txt Tester, and confirm that Googlebot, Bingbot, and your chosen AI retrieval bots are not inadvertently blocked. One of the most damaging technical SEO errors is a robots.txt disallow rule that prevents the entire site from being crawled, and it is more common than most people expect.

Eliminate low-value pages that waste crawl budget

For large sites, actively managing what gets crawled is as important as ensuring important pages are accessible. Add noindex tags or robots.txt disallow rules to pages that provide no ranking value: internal search result pages, filtered and sorted product category URLs with no unique content, thin tag archive pages, user profile pages, and admin or login URLs. Choose one method per URL rather than both, because a crawler blocked by robots.txt never fetches the page and so never sees its noindex tag. This concentrates crawl budget on the pages that actually need to be indexed and ranked.

How to improve indexability

Audit index coverage in Search Console regularly

Google Search Console's Page indexing report (formerly the Coverage report) is the most accurate available picture of your indexing health. Review it monthly, not just when traffic drops. Look for trends: is the number of indexed pages growing, stable, or declining? Are there "Crawled - currently not indexed" or "Discovered - currently not indexed" pages that should be indexed? Any page that should be ranking but is not appearing in search results should be checked here first.

Fix thin and duplicate content that prevents indexing

If pages are consistently appearing as "Crawled - currently not indexed," the most common cause is content that Google considers too thin or too similar to other indexed pages. The fix is either to substantially improve the content on those pages or to consolidate multiple thin pages into one comprehensive page and redirect the others. Adding unique value (original data, specific examples, additional depth) is more effective than simply adding word count.

Implement and verify canonical tags correctly

Every page with potential duplicate URL variants should have a canonical tag specifying the preferred version. This includes pages accessible at both HTTP and HTTPS, with and without www, with and without trailing slashes, and with URL parameter variations. Canonical tags should be self-referencing on unique pages (a page pointing to itself as its own canonical) and cross-referencing on duplicates. A common mistake is setting the canonical on a page to point to a different URL but keeping internal links pointing to the non-canonical version, which sends conflicting signals to Google. All internal links should point to the canonical URL directly.
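A normalization function is a handy way to see which URL variants should share one canonical. The sketch below encodes one possible policy (HTTPS, no `www`, no trailing slash, and a short list of parameters that do not change the content); that policy and the `IGNORED_PARAMS` list are assumptions for illustration, and your own preferred form may differ.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters that do not change page content on this site.
IGNORED_PARAMS = {"color", "sort", "sessionid"}


def preferred_url(url):
    """Map a URL variant to the preferred form under the assumed policy."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    path = parts.path.rstrip("/") or "/"
    kept = [(k, v) for k, v in parse_qsl(parts.query) if k not in IGNORED_PARAMS]
    return urlunsplit(("https", host, path, urlencode(kept), ""))


variants = [
    "http://example.com/product",
    "https://www.example.com/product/",
    "https://example.com/product?color=blue",
]

canonicals = {preferred_url(url) for url in variants}
print(canonicals)
```

All three variants collapse to `https://example.com/product`, which is the URL the canonical tags and the internal links should both use.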

Common crawl and index mistakes

| Mistake | What goes wrong | Fix |
|---|---|---|
| Blocking crawlers in robots.txt accidentally | Critical pages or entire directories become invisible to search engines and AI bots | Check robots.txt in Search Console after every change; review after any platform migration |
| Noindex on important pages | Pages are crawled but removed from the index, and rankings disappear | Audit for noindex tags on key pages using a crawl tool; remove or correct incorrect directives |
| Missing or broken XML sitemap | New and updated content is discovered slowly or not at all | Generate a clean sitemap of canonical, indexable URLs and submit it to Search Console |
| Orphan pages with no internal links | Crawlers have no link path to reach the page, so it is rarely or never crawled | Build internal links from relevant hub pages to all important content |
| Canonical tags pointing to the wrong URL | Link equity and indexing attention are split across multiple URLs | Audit canonical tags with a crawl tool; ensure all canonicals point to the intended preferred URL |
| JavaScript-only content | Crawlers see empty HTML; content is invisible until JavaScript executes, which many AI crawlers do not do | Server-side render all critical content: body text, headings, internal links, structured data |
| Low-value pages consuming crawl budget | Important pages are crawled infrequently because the budget is spent on thin or duplicate pages | Noindex or block low-value pages; use Search Console crawl stats to monitor budget allocation |

Conclusion

Crawlability and indexing are the prerequisites for everything else in SEO. No amount of content quality, link building, or on-page optimization produces results on a page that search engines cannot access or have chosen not to store. They are also the problems most likely to go unnoticed; a misconfigured robots.txt or an accidental noindex tag sits invisibly in the background while teams spend months wondering why a well-optimized page is not ranking.

The practical approach is straightforward: audit crawl access and indexing status regularly using Google Search Console, maintain a clean XML sitemap, build internal links that give crawlers a path to every important page, and review robots.txt any time a change is made to the site's configuration. In 2026, that audit should also include AI crawler access, verifying that retrieval bots for ChatGPT, Perplexity, and Claude can reach your content, which is now as important as confirming Googlebot can. The site audit guide covers how to structure that full technical review, and the broader technical SEO guide explains where crawlability and indexing sit within the complete technical SEO picture.