You can write excellent content, earn strong backlinks, and still rank on page three. Technical SEO is usually why. It is the infrastructure layer of your website that determines whether search engines can find your pages, understand what they mean, and deliver them fast enough for users to stay.

This guide explains what technical SEO is, how it differs from other types of SEO, its core components, and what you need to do about each.

What technical SEO means

Technical SEO is the process of optimizing your website's infrastructure so that search engines can efficiently crawl, index, and understand its content. It has nothing to do with what you write and everything to do with how your site is built, how fast it loads, how its pages connect, and how clearly it signals its structure to crawlers.

The simplest way to think about it: technical SEO ensures nothing gets in the way of search engines doing their job. Content and backlinks determine how well a page ranks once it is found. Technical SEO determines whether it gets found at all.

In 2026, technical SEO has expanded beyond its original scope. It now includes managing access for AI crawlers alongside traditional search bots, optimizing for Core Web Vitals as an active ranking signal, and ensuring JavaScript-heavy pages are accessible to systems that do not execute JavaScript at all. The fundamentals are stable. The scope keeps growing.

How technical SEO differs from on-page and off-page SEO

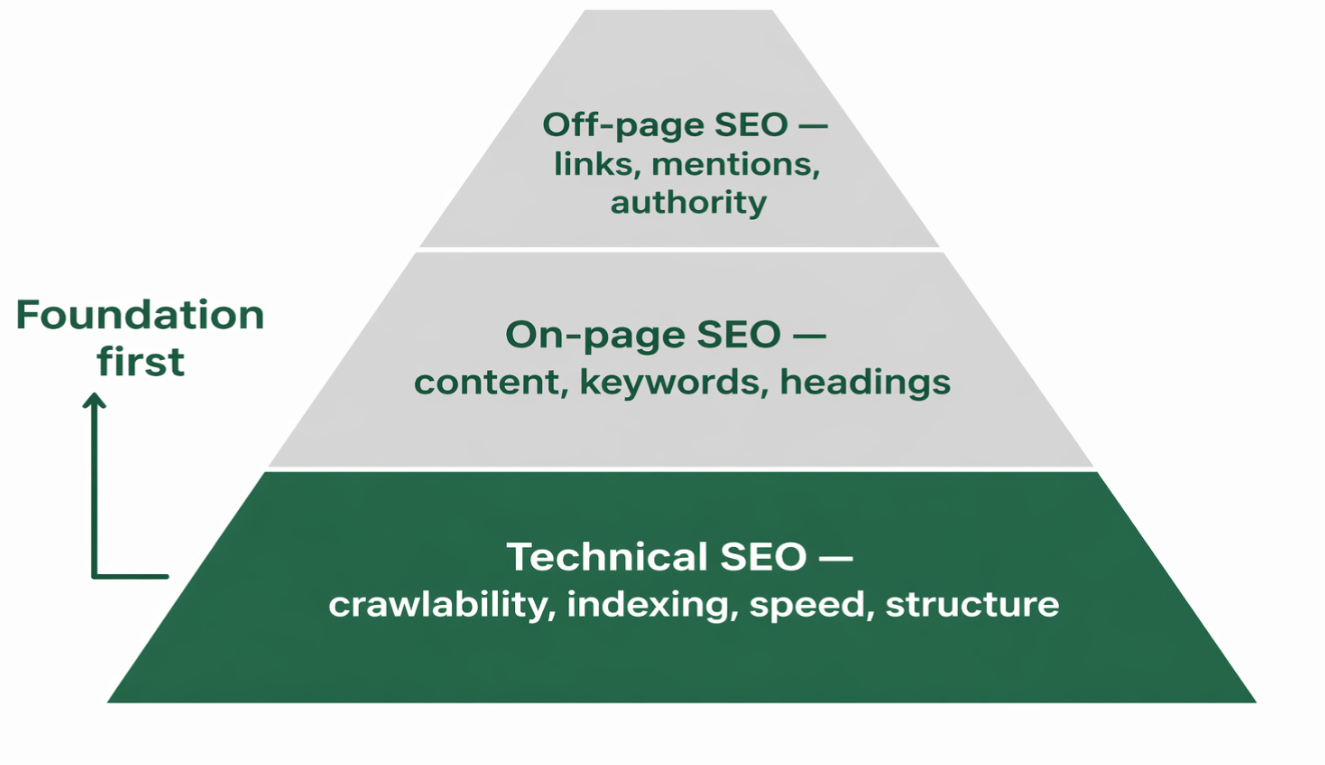

SEO splits into three layers. Understanding where each one starts and stops prevents the common mistake of applying the wrong fix to the wrong problem.

Type | What it covers | Core question |

Technical SEO | Crawlability, indexing, page speed, mobile optimization, HTTPS, site architecture, structured data, canonicalization, redirects | Can search engines access, understand, and process my site? |

On-page SEO | Content quality, keyword usage, headings, meta tags, internal linking, image optimization | Does my content satisfy what the user is searching for? |

Off-page SEO | Backlinks, brand mentions, digital PR, social signals | Does the broader web signal that my site is authoritative? |

Technical SEO is the foundation that the other two layers build on. A site with strong content and good backlinks but poor technical SEO will consistently underperform because search engines cannot fully process what is there. Fixing technical issues usually produces faster ranking improvements than content changes alone, because it unlocks value that already exists on the site.

The core components of technical SEO

Technical SEO is built on a few core elements that ensure search engines can properly access and understand your site. These components directly impact how well your pages get discovered and ranked.

Crawlability and robots.txt

Before a page can rank, a search engine must be able to reach it. Crawlability is controlled by your robots.txt file, which tells bots which pages to access and which to skip, and by your internal link structure, which is how bots navigate between pages.

A misconfigured robots.txt is one of the most costly and least visible technical SEO mistakes. In 2026, it has also become more complex: AI platforms such as OpenAI, Anthropic, and Perplexity each operate multiple crawlers with different functions. Training bots collect data for model development. Retrieval and search bots find content to cite in real-time AI responses. A blanket rule that blocks all AI bots to prevent training scraping often also blocks the retrieval bots that determine whether your content appears in ChatGPT or Perplexity responses. Each bot needs to be evaluated and configured separately. You can manage this through a well-structured robots.txt setup.
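As a rough illustration of per-bot configuration, the sketch below uses Python's standard `urllib.robotparser` to check which crawlers a given robots.txt policy admits. The user-agent tokens (GPTBot, OAI-SearchBot, ClaudeBot, Claude-SearchBot, PerplexityBot), the policy itself, and the example.com URLs are assumptions for illustration; confirm current bot names in each vendor's documentation before relying on them.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: block training crawlers, allow retrieval/search crawlers.
# Bot tokens are assumptions; verify them against each vendor's documentation.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: Claude-SearchBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: *
Disallow: /admin/
"""

BOTS = ["Googlebot", "GPTBot", "OAI-SearchBot", "ClaudeBot",
        "Claude-SearchBot", "PerplexityBot"]


def audit_bots(robots_txt, bots, url):
    """Return {bot: allowed} for one URL under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in bots}


# Print one line per bot so a blanket block is easy to spot.
for bot, allowed in audit_bots(ROBOTS_TXT, BOTS, "https://example.com/blog/post").items():
    print(f"{bot:18} {'allowed' if allowed else 'blocked'}")
```

Running a check like this after every robots.txt change makes it harder for a rule aimed at training bots to silently catch a retrieval bot as well.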

XML sitemaps

An XML sitemap lists all your important URLs so search engines can discover them efficiently, even if internal linking does not surface every page. A clean sitemap includes only canonical, indexable URLs. It excludes redirected pages, noindexed pages, and pages blocked by robots.txt. Submitting it through Google Search Console and Bing Webmaster Tools speeds up discovery for new content and helps identify indexing issues early. For a step-by-step setup, the XML sitemap guide covers the full process.
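The filtering rule above can be sketched in a few lines of Python. This is a minimal example, assuming you already have crawl records for each URL; the record fields (`status`, `noindex`, `blocked`, `canonical`) and the example.com URLs are hypothetical names chosen for illustration.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

# Hypothetical crawl records; the field names are assumptions for this sketch.
PAGES = [
    {"url": "https://example.com/", "status": 200, "noindex": False,
     "blocked": False, "canonical": "https://example.com/"},
    {"url": "https://example.com/shoes", "status": 200, "noindex": False,
     "blocked": False, "canonical": "https://example.com/shoes"},
    {"url": "https://example.com/old", "status": 301, "noindex": False,
     "blocked": False, "canonical": "https://example.com/new"},
    {"url": "https://example.com/thanks", "status": 200, "noindex": True,
     "blocked": False, "canonical": "https://example.com/thanks"},
    {"url": "https://example.com/shoes?sort=price", "status": 200, "noindex": False,
     "blocked": False, "canonical": "https://example.com/shoes"},
]


def sitemap_eligible(page):
    """Keep only 200-status, indexable, unblocked, self-canonical URLs."""
    return (page["status"] == 200
            and not page["noindex"]
            and not page["blocked"]
            and page["canonical"] == page["url"])


def build_sitemap(pages):
    """Serialize the eligible pages as a sitemap XML string."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if sitemap_eligible(page):
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = page["url"]
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


print(build_sitemap(PAGES))
```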

Indexing and index control

Crawling and indexing are separate. A bot can crawl a page without indexing it. Indexing means the page enters the search engine's database and becomes eligible to appear in results. You control this with meta robots tags, specifically noindex on pages you do not want to surface in search. Common candidates include thin content pages, filtered URLs, thank-you pages, and staging environments.
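One way to verify index control at scale is to scan page HTML for the meta robots tag. The sketch below uses Python's standard `html.parser`; the class and function names are hypothetical, and it only inspects the `<meta name="robots">` tag, not HTTP headers.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() == "robots":
            for directive in attributes.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def is_noindex(html):
    """Return True when the page carries a noindex meta robots directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    return "noindex" in parser.directives


thank_you_page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(is_noindex(thank_you_page))
```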

Page speed and Core Web Vitals

Page speed is both a ranking signal and a user experience signal. Google measures it through Core Web Vitals: three metrics that assess real-world loading performance, responsiveness, and visual stability.

Metric | What it measures | Good threshold |

LCP (Largest Contentful Paint) | How fast the main content loads | Under 2.5 seconds |

INP (Interaction to Next Paint) | How quickly the page responds to interactions | Under 200 milliseconds |

CLS (Cumulative Layout Shift) | Visual stability as page elements settle | Below 0.1 |

Load time has a measurable effect on user behavior. Widely cited industry studies report that a two-second delay in load time can more than double bounce rate, and that 53% of mobile visits are abandoned when a page takes more than three seconds to load. Sites that move from failing to passing Core Web Vitals tend to see improvements in engagement and conversion rates.
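To make the thresholds in the table concrete, here is a small sketch that rates a page's field metrics. The "good" ceilings come from the table; the "poor" floors (4.0 s, 500 ms, 0.25) are Google's published boundaries and go beyond what this article states, and the metric key names are hypothetical.

```python
# (good ceiling, poor floor) per metric. The "good" values match the table;
# the "poor" values are Google's published boundaries, added as an assumption.
THRESHOLDS = {
    "lcp_seconds": (2.5, 4.0),
    "inp_ms": (200, 500),
    "cls": (0.1, 0.25),
}


def rate_metric(name, value):
    """Classify one metric value as good, needs improvement, or poor."""
    good, poor = THRESHOLDS[name]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


def passes_core_web_vitals(metrics):
    """A page passes only when every supplied metric rates as good."""
    return all(rate_metric(name, value) == "good" for name, value in metrics.items())


# Hypothetical 75th-percentile field values for one page.
page = {"lcp_seconds": 2.1, "inp_ms": 350, "cls": 0.05}
for name, value in page.items():
    print(name, value, rate_metric(name, value))
print("passes:", passes_core_web_vitals(page))
```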

Mobile optimization

Google uses mobile-first indexing: the mobile version of your page is the primary basis for indexing and ranking. With mobile devices accounting for over 60% of global web traffic, a page that performs well on desktop but breaks or loads slowly on mobile is treated by Google as a poorly optimized page. Responsive design, appropriately sized tap targets, no intrusive interstitials, and fast mobile load times are all standard requirements, not optional improvements.

HTTPS and site security

HTTPS has been a confirmed Google ranking signal since 2014 and is now baseline infrastructure. It encrypts data between the browser and server, protects users, and is expected by every major search engine and browser. 84% of consumers abandon a site that shows security warnings. HTTPS adoption sits at over 91% across the web, so it is no longer a differentiator, but any site still on HTTP is at an active disadvantage. The full setup and migration process is covered in the HTTPS and SSL SEO guide.

Canonicalization

Canonicalization tells search engines which version of a URL is the preferred original when multiple versions of the same content are accessible. Without canonical tags, link equity splits across duplicate URLs and dilutes the authority that should consolidate on one page. Common sources of duplicates include HTTP vs HTTPS, www vs non-www, trailing slashes, and e-commerce filter or sort parameters. The canonicalization guide covers implementation and the edge cases that cause problems most often.
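The duplicate sources listed above can be collapsed with a single normalization function. The sketch below assumes one possible policy (HTTPS, no `www`, no trailing slash, filter and tracking parameters dropped); the policy, the parameter names, and the function names are illustrative assumptions, not a universal rule.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed policy for illustration: these filter/sort/tracking parameters
# do not change the page's main content, so they are dropped.
IGNORED_PARAMS = {"sort", "color", "utm_source", "utm_medium", "utm_campaign"}


def canonical_url(url):
    """Map HTTP/HTTPS, www/non-www, and trailing-slash variants to one URL."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    path = parts.path.rstrip("/") or "/"
    kept = [(k, v) for k, v in parse_qsl(parts.query) if k not in IGNORED_PARAMS]
    return urlunsplit(("https", host, path, urlencode(kept), ""))


def canonical_tag(url):
    """Build the <link rel="canonical"> element for a page URL."""
    return f'<link rel="canonical" href="{escape(canonical_url(url), quote=True)}">'


print(canonical_tag("http://www.example.com/shoes/?sort=price&page=2"))
```

Whatever policy you choose, the point is that every variant of a URL resolves to the same canonical value.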

Structured data

Structured data is code you add to a page to explicitly tell search engines what the content means, rather than leaving them to infer it. The most widely used format is JSON-LD using Schema.org vocabulary. It labels content types (articles, FAQs, how-to guides, products, reviews, organizations) and can make pages eligible for rich results in search, such as review stars and event details, which increase visibility and click-through rates. Since 2023, Google has shown FAQ rich results only for a limited set of authoritative government and health sites and has retired how-to rich results, so on most sites that markup no longer produces those result types. 72% of first-page Google results use schema markup. Structured data also supports AI citation selection: it reduces ambiguity about what a page contains, which helps LLMs extract and cite your content accurately. The structured data for LLMs guide explains the AI-specific dimension in detail.
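As a minimal sketch of what JSON-LD markup looks like, the function below builds an Article snippet with Python's `json` module. The function name, the chosen properties, and the sample values are assumptions for illustration; check Schema.org and Google's documentation for the properties your page type actually needs.

```python
import json


def article_jsonld(headline, author, date_published, url):
    """Return a <script> element containing Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    # Escape "</" so a value can never close the script element early;
    # "<\/" is still valid JSON.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical values for illustration.
print(article_jsonld("What Is Technical SEO?", "Jane Doe",
                     "2026-01-15", "https://example.com/technical-seo"))
```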

Site architecture and internal linking

Site architecture is how your pages are organized and how they link to each other. A logical, shallow structure where any page is reachable within three to four clicks from the homepage ensures crawlers can navigate efficiently and that link equity flows through the site rather than getting trapped in deep, poorly linked sections.

Internal links are also how search engines understand topical relationships. A cluster of supporting pages linked around a central hub signals topical authority.
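Click depth is straightforward to measure once you have a crawl of your internal links. The sketch below runs a breadth-first search from the homepage over a hypothetical link graph and flags pages deeper than four clicks or not reachable at all; the graph, paths, and function names are made up for illustration.

```python
from collections import deque

# Hypothetical internal-link graph: page -> pages it links to.
LINKS = {
    "/": ["/blog", "/products"],
    "/blog": ["/blog/technical-seo"],
    "/products": ["/products/shoes"],
    "/products/shoes": ["/products/shoes/red"],
    "/products/shoes/red": ["/products/shoes/red/size-9"],
    "/products/shoes/red/size-9": ["/products/shoes/red/size-9/reviews"],
    "/orphan": [],
}


def click_depths(links, home="/"):
    """Breadth-first search: minimum number of clicks from the homepage."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep_or_orphaned(links, max_depth=4, home="/"):
    """List pages beyond max_depth clicks or unreachable from the homepage."""
    depths = click_depths(links, home)
    pages = set(links) | {t for targets in links.values() for t in targets}
    return sorted(p for p in pages if depths.get(p, max_depth + 1) > max_depth)


print(too_deep_or_orphaned(LINKS))
```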

JavaScript SEO

JavaScript-heavy sites present a persistent technical SEO challenge. Googlebot can render JavaScript, but rendering happens in a separate, deferred step after the initial crawl, which can delay indexing of client-side content, in some cases by days or weeks. Most major AI crawlers currently do not execute JavaScript at all, so content rendered client-side is invisible to them, regardless of how well the page performs for human visitors.

For critical content (body text, headings, internal links, and structured data), server-side rendering or static HTML is strongly preferred. The JavaScript SEO guide covers how to audit your rendering setup and what to do when important content is only visible after JavaScript executes.
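A quick way to approximate what a non-rendering crawler sees is to check the raw HTML response for your critical phrases without executing any scripts. The sketch below does this with Python's standard `html.parser`; the sample pages, phrases, and names are hypothetical.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collect text outside <script> and <style>, with no JavaScript executed."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def missing_from_raw_html(raw_html, critical_phrases):
    """Return the phrases that do not appear in the unrendered HTML text."""
    parser = VisibleTextParser()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [p for p in critical_phrases if p.lower() not in text]


# Hypothetical responses: one client-rendered shell, one server-rendered page.
CLIENT_RENDERED = """<html><body><div id="root"></div>
<script>renderApp("Pricing plans")</script>
</body></html>"""
SERVER_RENDERED = "<html><body><h1>Pricing plans</h1><p>Compare tiers</p></body></html>"

print(missing_from_raw_html(CLIENT_RENDERED, ["Pricing plans"]))
print(missing_from_raw_html(SERVER_RENDERED, ["Pricing plans", "Compare tiers"]))
```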

Redirects and 404 management

A 301 redirect signals a permanent URL move and transfers the majority of link equity to the destination. A 302 signals a temporary move, so search engines may keep the original URL indexed instead of consolidating signals on the destination. Using 302s where 301s are intended is a common mistake that slowly bleeds authority from pages that have earned links. Redirect chains (A redirects to B, which redirects to C, instead of A going directly to C) add crawl delay and can lose a small amount of equity at each hop.
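Chains and misplaced temporary redirects are easy to detect from an exported redirect map. This is a sketch under the assumption that you can export redirects as source, status code, and target; the map contents and function names are hypothetical.

```python
# Hypothetical redirect map: source path -> (status code, target path).
REDIRECTS = {
    "/a": (301, "/b"),
    "/b": (302, "/c"),
    "/old-pricing": (301, "/pricing"),
}


def resolve(path, redirects, max_hops=10):
    """Follow redirects from path; return (final path, list of hops)."""
    hops = []
    seen = {path}
    while path in redirects and len(hops) < max_hops:
        status, target = redirects[path]
        hops.append((path, status, target))
        if target in seen:
            raise ValueError(f"redirect loop at {target}")
        seen.add(target)
        path = target
    return path, hops


def audit_redirects(redirects):
    """Report chains (more than one hop) and any temporary hops inside them."""
    report = {}
    for source in redirects:
        final, hops = resolve(source, redirects)
        if len(hops) > 1:
            report[source] = {
                "final": final,
                "hops": len(hops),
                "temporary": [src for src, status, _ in hops if status in (302, 307)],
            }
    return report


print(audit_redirects(REDIRECTS))
```

Each reported source should be updated to point straight at its `final` value, which removes the intermediate hops.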

404 errors waste crawl budget and degrade user experience. For AI visibility, they carry an additional risk: AI platforms frequently invent plausible-looking URLs for pages they expect to exist, then send visitors to URLs that do not exist. Monitoring your GA4 referral traffic from AI platforms against your 404 log monthly is now standard practice.
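The monthly cross-check can be as simple as counting 404 hits whose referrer is an AI platform. The sketch below assumes you have merged your analytics and server-log exports into simple records; the referrer hostnames, field names, and sample rows are all hypothetical.

```python
from collections import Counter

# Assumed referrer hostnames for AI platforms; adjust to what your logs show.
AI_REFERRERS = {"chatgpt.com", "perplexity.ai", "claude.ai"}

# Hypothetical merged log rows.
HITS = [
    {"path": "/guides/technical-seo", "referrer": "chatgpt.com", "status": 200},
    {"path": "/guides/seo-checklist-2026", "referrer": "chatgpt.com", "status": 404},
    {"path": "/guides/seo-checklist-2026", "referrer": "perplexity.ai", "status": 404},
    {"path": "/pricing-calculator", "referrer": "perplexity.ai", "status": 404},
    {"path": "/typo", "referrer": "google.com", "status": 404},
]


def ai_referred_404s(hits, ai_referrers=AI_REFERRERS):
    """Count 404 hits per path where the visit came from an AI platform."""
    counts = Counter(
        hit["path"]
        for hit in hits
        if hit["status"] == 404 and hit["referrer"] in ai_referrers
    )
    return counts.most_common()


for path, count in ai_referred_404s(HITS):
    print(f"{count:3} {path}")
```

Paths that recur in this list are candidates for a redirect to the closest real page, or for a new page if the invented URL reflects genuine demand.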

How to run a technical SEO audit

A technical audit moves systematically from access and discovery through to performance and structured data. These are the steps in order:

Crawl the site. Use Screaming Frog, Ahrefs Site Audit, or Semrush Site Audit to crawl your site the way a search engine does. This surfaces broken links, redirect chains, missing meta tags, duplicate content, and crawl depth issues across every page.

Check Search Console. The Page indexing report (formerly Coverage) shows which pages are indexed and why others are excluded. The URL Inspection tool shows exactly how Google sees any individual page: whether it was crawled, how it rendered, and whether it is indexed. This is the most authoritative signal available about your real index status.

Review robots.txt and sitemap. Confirm robots.txt is not accidentally blocking important pages or crawlers. Verify the sitemap contains only canonical, indexable URLs and is submitted in Search Console and Bing Webmaster Tools.

Measure Core Web Vitals. Use Google PageSpeed Insights for page-level diagnostics and the Core Web Vitals report in Search Console for site-wide field data. Prioritize pages with the most organic traffic and conversion pages first.

Audit canonicals and redirects. Identify redirect chains, incorrect canonical tags, and pages that link internally to redirected URLs rather than directly to the final destination.

Validate structured data. Use Google's Rich Results Test to confirm structured data is correctly implemented and eligible for rich results. Check for missing schema on FAQ pages, how-to content, and articles.

Test JavaScript rendering. Compare the raw HTML your server returns (for example, via view-source) against the rendered HTML shown in Search Console's URL Inspection tool. Any content that appears only in the rendered version is invisible to crawlers that do not execute JavaScript, which includes most AI crawlers, and may be indexed late by Google.

Common technical SEO mistakes

Mistake | What goes wrong | Fix |

Blocking pages in robots.txt accidentally | Crawlers cannot access content that needs to rank | Audit robots.txt after every migration or CMS change |

Duplicate content without canonicals | Link equity splits across multiple URL versions | Add rel=canonical to all duplicate and near-duplicate pages |

JavaScript-rendered critical content | Body text is invisible to crawlers that do not execute JavaScript, including most AI crawlers, and may be indexed late by Google | Use server-side rendering for headings, body content, and internal links |

Slow page speed failing Core Web Vitals | Higher bounce rates and a weakened page experience ranking signal | Compress images, defer non-critical scripts, and use a CDN |

Redirect chains | Equity lost at each hop; crawl efficiency reduced | Update all chains to redirect directly to the final destination |

No structured data on key page types | Ineligible for rich results; less clarity for AI citation selection | Implement JSON-LD schema for FAQ, Article, HowTo, and Organization pages |

Conclusion

Technical SEO is not a project you complete once. It is the ongoing maintenance of the infrastructure that every other SEO investment depends on. Content and backlinks determine how pages compete once they are found. Technical SEO determines whether they are found, indexed, and served quickly enough to rank.

The fundamentals have been stable for years: crawlability, indexing, page speed, mobile optimization, HTTPS, canonicalization, structured data, and clean architecture. What has changed in 2026 is the expanded scope: AI crawler management and JavaScript rendering compatibility are now standard requirements alongside the traditional baseline.

The sites that get technical SEO right do not just avoid technical problems. They protect their existing rankings through algorithm updates, index new content faster, earn rich results that improve CTR, and remain accessible to every system, from Googlebot to Claude-SearchBot, that determines who gets found and who does not. That kind of foundation is what everything else builds on. The site audit guide is the right place to start if you want to identify and prioritize the technical issues on your site right now.