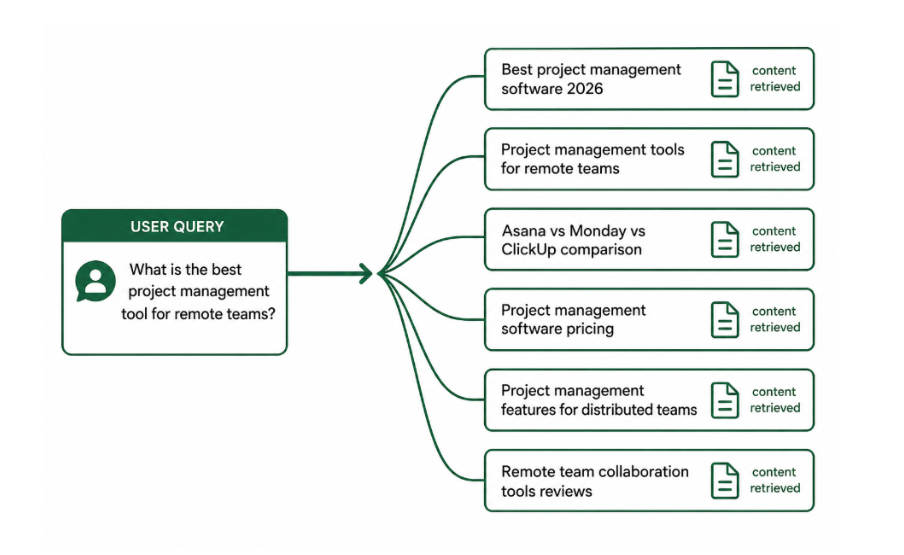

When you type a question into ChatGPT, Perplexity, or Google AI Mode, the AI does not search for your exact phrase and retrieve the top result. It does something far more complex — and far more consequential for how your content gets discovered. It breaks your question apart into a series of smaller, parallel sub-queries, retrieves content that answers each one separately, then synthesizes all of that into a single coherent response.

This guide explains what query fan-out is, how it works across different AI platforms, why it fundamentally changes SEO strategy, and exactly how to optimize for it.

What does query fan-out mean?

Query fan-out is the process by which AI search systems decompose a single user query into multiple parallel sub-queries to retrieve diverse, comprehensive information before generating an answer. The term was formally introduced by Google's Head of Search, Elizabeth Reid, at Google I/O 2025 when describing how AI Mode works: the system recognizes when a question needs advanced reasoning, calls on Gemini to break it into subtopics, and issues multiple queries simultaneously on the user's behalf.

The concept is not unique to Google. ChatGPT Search, Perplexity, Claude, and Gemini all use similar decomposition processes when handling queries that require information from multiple sources or cover multiple angles. The specific implementation varies by platform, but the core mechanism is consistent: one user query becomes many retrieval events, and the content that gets cited is the content that answers the sub-queries — not necessarily the content that ranks for the original phrase.

How query fan-out works step by step

Understanding the mechanics helps you build content that captures multiple sub-query slots rather than optimizing for only one keyword. The process follows a consistent sequence across AI platforms:

Step 1: Intent decomposition. The AI analyzes the user's query and identifies the different subtopics, angles, and information needs embedded within it. A question like "what is the best CRM for small businesses" contains subtopics around pricing, integrations, ease of use, user reviews, scalability, and industry-specific use cases, all of which may be searched separately.

Step 2: Parallel sub-query generation. The system generates multiple sub-queries targeting different facets of the original question. Google AI Mode's Deep Search can issue hundreds of sub-queries for complex questions. Standard AI search typically generates 8 to 12 sub-queries per user prompt, though this varies significantly by complexity.

Step 3: Parallel retrieval. All sub-queries are executed simultaneously. The AI retrieves pages that rank for each sub-query, then evaluates which passages from those pages best answer the specific sub-question that triggered their retrieval.

Step 4: Synthesis. Retrieved passages from multiple sources are combined into a single response. Citations are assigned to the sources that contributed the most directly usable passages. A source that contributes clear, extractable passages to multiple sub-queries is more likely to be cited prominently than one that ranks well for the original query but only answers one narrow facet of it.
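To make the four steps concrete, here is a minimal Python sketch of a fan-out pipeline. It is a toy model, not how any real AI search product is implemented: the `TOY_INDEX` data, the `.example` domains, and the `decompose`, `retrieve`, and `fan_out` functions are all hypothetical, and a real system would use a language model for decomposition and a live search index for retrieval.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical toy index: facet -> list of (source, passage). Made-up data.
TOY_INDEX = {
    "pricing": [("site-a.example", "Sample passage about pricing.")],
    "integrations": [
        ("site-a.example", "Sample passage about integrations."),
        ("site-b.example", "Another sample passage about integrations."),
    ],
    "ease of use": [("site-c.example", "Sample passage about ease of use.")],
}


def decompose(query, facets):
    """Steps 1 and 2: turn one query into one sub-query per facet.

    A real system would infer the facets itself; here they are passed in.
    """
    return [f"{query} {facet}" for facet in facets]


def retrieve(sub_query):
    """Step 3: return passages for one sub-query (stand-in for a web search)."""
    for facet, passages in TOY_INDEX.items():
        if sub_query.endswith(facet):
            return passages
    return []


def fan_out(query, facets):
    sub_queries = decompose(query, facets)
    # Run all retrievals concurrently; map() keeps results in input order.
    with ThreadPoolExecutor() as pool:
        results = list(pool.map(retrieve, sub_queries))
    # Step 4 (simplified): count how many sub-queries each source answered.
    citations = {}
    for passages in results:
        for source in {src for src, _ in passages}:
            citations[source] = citations.get(source, 0) + 1
    return sub_queries, citations


sub_queries, citations = fan_out(
    "best CRM for small businesses", ["pricing", "integrations", "ease of use"]
)
print(sub_queries)
print(citations)
```

In this toy run, the source that answers two facets ends up with the highest count, which mirrors the point in Step 4: breadth across sub-queries, not rank for the original phrase, drives how often a source is used.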

Why this breaks traditional SEO strategy

Traditional SEO optimizes pages for one primary keyword. The underlying assumption is that ranking for a term means your page gets shown when that term is searched. Query fan-out invalidates this assumption for AI search.

When an AI processes a query, it does not retrieve the top-ranking page for that query and show it to the user. It generates a set of sub-queries, retrieves content for each, and builds a synthesized answer. A page that ranks number one for "best project management software" may never appear in an AI-generated answer for that query if its content does not clearly address the pricing, integrations, and comparison sub-queries that the AI generates during fan-out retrieval.

This is why only half of pages ranking number one on Google are cited by AI search engines. AI search optimization is not a natural extension of traditional SEO keyword strategy. It requires a different approach: optimizing for topical breadth and sub-query coverage, not just primary keyword ranking.

How query fan-out differs across AI platforms

| Platform | Fan-out behavior | SEO implication |
| --- | --- | --- |
| Google AI Mode | Aggressive fan-out using Gemini; can issue hundreds of sub-queries for complex questions via Deep Search | Topical cluster depth matters most; sites covering a topic across multiple interconnected pages are favored |
| Google AI Overviews | Lighter fan-out for quick answers to straightforward queries; typically 3 to 8 sub-queries | Featured snippet optimization and FAQ schema increase extraction probability |
| Perplexity | Real-time web retrieval with fan-out decomposition; strong recency bias in sub-query results | Fresh content and specific factual passages with citations are prioritized in retrieval |
| ChatGPT Search | Decomposes prompts and sends sub-queries to Bing; blends parametric knowledge with retrieved content | High-authority pages covering multiple sub-topic angles perform better than single-angle pages |
| Claude | Real-time retrieval via Claude-SearchBot with decomposition-based retrieval | Answer-first structure and author credentials improve sub-query passage extraction |

What content gets cited during fan-out

AI systems do not evaluate your page as a whole during fan-out retrieval. They evaluate individual passages: discrete chunks of text that stand alone as answers to a specific sub-query. This changes what good content looks like for AI search.

A passage that leads with a clear answer to a specific question, follows with supporting evidence or data, and stays focused on one point is far more likely to be retrieved and cited during fan-out than a passage that gradually builds toward a point across multiple paragraphs. Including facts and statistics in content can boost visibility in AI responses by up to 25%, because AI systems can anchor specific sub-query answers to verifiable data points within your page.

The practical implication: every H2 and H3 section of your page is a potential citation unit. Each section should be written as a self-contained, directly answerable passage, not as part of a narrative that requires the surrounding context to make sense. Content that scatters related information across different sections of a page is much harder for AI to extract during fan-out retrieval than content organized into clean, topically focused sections.
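One way to picture "every H2 and H3 is a citation unit" is to split a page into sections yourself and read each one in isolation. The sketch below assumes the page is available as markdown and uses a hypothetical helper, `split_into_sections`; real retrieval systems chunk content in their own, undocumented ways, so treat this as a self-review aid rather than a model of any specific platform. The sample page text is made up.

```python
import re


def split_into_sections(markdown_text):
    """Split markdown into (heading, body) pairs at H2 and H3 headings."""
    sections = []
    heading, body = None, []
    for line in markdown_text.splitlines():
        match = re.match(r"^(#{2,3})\s+(.*)", line)
        if match:
            # A new H2/H3 closes the previous section.
            if heading is not None:
                sections.append((heading, " ".join(body).strip()))
            heading, body = match.group(2).strip(), []
        elif heading is not None and line.strip():
            body.append(line.strip())
    if heading is not None:
        sections.append((heading, " ".join(body).strip()))
    return sections


# Made-up sample page for illustration only.
page = """# CRM guide
Intro text that belongs to no H2 or H3 section.
## How much does a CRM cost?
Direct answer sentence goes here. Supporting detail follows.
### Which CRMs integrate with Gmail?
Several tools offer this. More detail follows.
"""

sections = split_into_sections(page)
for heading, body in sections:
    # The opening sentence is what a reader of the isolated passage sees first.
    print(heading, "->", body.split(". ")[0])
```

If the opening sentence printed for a section does not answer the question its heading implies, that section is a candidate for rewriting.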

How to optimize for query fan-out

Build topic clusters, not standalone pages

The single most effective optimization for query fan-out is building topic clusters: a central hub page connected to multiple supporting pages, with each page covering a specific subtopic in depth. When an AI generates fan-out sub-queries around a topic, a site with ten interconnected pages covering different angles of that topic has ten chances to be retrieved across the sub-query set. A site with one comprehensive page has one chance.

Topic clusters also signal topical authority, which AI systems use to determine which sources are worth citing consistently. Keyword clustering is the process of identifying which subtopics belong in a cluster. Use it to map your fan-out sub-queries to specific pages before writing your content.

Write every section as a self-contained answer

Structure every H2 and H3 section to open with a direct answer to the question that heading implies, followed by supporting detail. Avoid introductory paragraphs that set context before delivering the point. AI retrieval systems prioritize passages that answer sub-questions immediately and clearly. Sections that take too long to reach the main point are less likely to be extracted, cited, or surfaced in AI-generated responses.

This is the same answer-first writing principle that drives AEO and on-page SEO performance. The difference is the reason: in traditional SEO, it improves featured snippet capture. In fan-out retrieval, it determines whether your passage gets selected as a citation source for one of the AI's sub-queries.

Identify your fan-out sub-queries and cover them explicitly

You can reverse-engineer the sub-queries an AI is likely to generate for your target topic by running the topic through Perplexity or Google AI Mode and reviewing the internal search steps it takes. In Perplexity, click the search steps dropdown to see the actual sub-queries the system ran. In Google AI Mode, watch the search activity panel during a complex query. These are the exact terms you need content for.

Tools like AlsoAsked, AnswerThePublic, and Google's People Also Ask results surface related question patterns that map closely to fan-out sub-queries. Prompt tracking tools can also show you which prompts your brand is already being retrieved for and which sub-query angles you are missing.
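Once you have collected a list of sub-queries by hand, a rough coverage check can flag which ones no existing page addresses. The following Python sketch uses simple word overlap; the `tokens` and `coverage_gaps` helpers, the `0.6` threshold, and the sample URLs and page text are all assumptions for illustration, and word overlap is only a crude proxy for how a retrieval system would actually match content.

```python
def tokens(text):
    """Lowercased words longer than three characters, punctuation stripped."""
    return {w.strip(".,?!\"'").lower() for w in text.split() if len(w) > 3}


def coverage_gaps(sub_queries, pages, threshold=0.6):
    """Map each sub-query to its best-matching page, or list it as a gap."""
    covered, gaps = {}, []
    for sub_query in sub_queries:
        sq_tokens = tokens(sub_query)
        best_url, best_score = None, 0.0
        for url, text in pages.items():
            # Share of the sub-query's words that also appear on the page.
            score = len(sq_tokens & tokens(text)) / len(sq_tokens) if sq_tokens else 0.0
            if score > best_score:
                best_url, best_score = url, score
        if best_score >= threshold:
            covered[sub_query] = best_url
        else:
            gaps.append(sub_query)
    return covered, gaps


# Sub-queries copied by hand from an AI search tool; page text is made up.
sub_queries = [
    "email marketing pricing comparison",
    "email marketing tools with Shopify integration",
    "Klaviyo vs Mailchimp for small stores",
]
pages = {
    "/pricing": "A pricing comparison of email marketing platforms",
    "/shopify": "Email marketing tools with a native Shopify integration",
}

covered, gaps = coverage_gaps(sub_queries, pages)
print("Covered:", covered)
print("Gaps:", gaps)
```

Each entry in `gaps` is a sub-query angle with no matching page, which makes it a candidate for a new supporting page or a new section on an existing one.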

Use structured data to label your passages explicitly

FAQ schema, HowTo schema, and Article schema help AI systems map your content to specific question types during fan-out retrieval. A page with FAQ schema on its bottom section is explicitly labeling those Q&A pairs as answer candidates, making it easier for the AI to select and cite them when those questions match a fan-out sub-query. The structured data for LLMs guide covers which schema types are most effective for AI retrieval specifically.
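As an illustration of FAQ schema, the sketch below builds a schema.org `FAQPage` object as JSON-LD from a list of question and answer pairs. The `faq_jsonld` helper and the sample Q&A text are hypothetical; the `FAQPage`, `Question`, `Answer`, `mainEntity`, and `acceptedAnswer` names come from the schema.org vocabulary. Whether a given AI system actually uses this markup during retrieval is not something this example can confirm.

```python
import json


def faq_jsonld(qa_pairs):
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)


# Made-up Q&A pair; in practice, mirror the visible FAQ text on the page.
markup = faq_jsonld([
    ("What is query fan-out?",
     "Query fan-out is the process of splitting one query into parallel sub-queries."),
])
print(markup)
```

The resulting string would typically be placed in a `<script type="application/ld+json">` element on the page that displays the same questions and answers.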

A practical example of fan-out in action

The query

User asks ChatGPT: "What is the best email marketing platform for a small e-commerce store that sells handmade goods?"

What the AI does

The system fans out into sub-queries including: "best email marketing software for e-commerce," "email marketing platforms for small businesses," "email marketing tools with Shopify integration," "email marketing pricing comparison," "Klaviyo vs Mailchimp for small stores," and "email automation for handmade product sellers." Each sub-query is searched separately, and the most useful passages from each retrieval are synthesized into a response.

What gets cited

A page that covers only "best email marketing software" in general terms may rank well but gets retrieved for only one sub-query. A site with a pillar page on email marketing for e-commerce plus supporting pages on pricing comparison, Shopify integrations, and automation workflows gets retrieved across five or six sub-queries. It appears in the synthesis as a consistent source, earning a citation even if no individual page outranks the competition for the primary keyword.

Common mistakes when optimizing for query fan-out

| Mistake | Why it hurts | Fix |
| --- | --- | --- |
| Optimizing for one primary keyword only | AI fan-out retrieves across sub-queries; a single-page strategy misses most retrieval events | Build topic clusters covering multiple sub-query angles with interconnected supporting pages |
| Writing narrative content that buries answers | AI extracts passages, not narratives; sections that delay the answer are rarely cited | Open every H2 and H3 with a direct answer before any background or context |
| Scattering related information across a page | AI systems chunk content by section; fragmented information across paragraphs is harder to retrieve | Group related facts and answers into dedicated, self-contained sections |
| Ignoring question-based sub-topics | Fan-out generates question-style sub-queries; content without question-based headings misses those retrieval events | Phrase key headings as questions; add FAQ sections that map to likely sub-queries |
| Measuring AI visibility with rank trackers only | Traditional rank trackers show keyword positions, not sub-query citation rates | Use AI visibility tools and manual prompt testing to measure fan-out coverage |
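For manual prompt testing, even a simple log can be turned into a citation rate. The sketch below assumes you record, for each test prompt, which domains the AI answer cited; the log structure, the `citation_rate` helper, and the `.example` domains are hypothetical and not tied to any particular AI visibility tool.

```python
def citation_rate(test_log, domain):
    """Share of logged prompts whose answer cited the given domain."""
    if not test_log:
        return 0.0
    hits = sum(1 for entry in test_log if domain in entry["cited_domains"])
    return hits / len(test_log)


# Hand-recorded results from manual prompt tests (made-up data).
test_log = [
    {"prompt": "best email marketing platform for small e-commerce store",
     "cited_domains": ["yoursite.example", "competitor.example"]},
    {"prompt": "email marketing pricing comparison",
     "cited_domains": ["competitor.example"]},
    {"prompt": "email marketing tools with Shopify integration",
     "cited_domains": ["yoursite.example"]},
]

rate = citation_rate(test_log, "yoursite.example")
print(f"Cited in {rate:.0%} of tested prompts")
```

Tracking this figure over time, per topic, gives a rough view of fan-out coverage that a keyword rank tracker does not provide.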

Conclusion

Query fan-out is the mechanism that explains why traditional SEO rankings are an unreliable predictor of AI search visibility. When one user query becomes eight to twelve parallel sub-queries, the pages that get cited are not necessarily the pages that rank best for the original phrase; they are the pages with the clearest, most self-contained passages covering the widest range of subtopics that the AI generates during retrieval.

The optimization strategy that follows is straightforward in principle: build topic clusters that cover your subject area from multiple angles, write every section as a directly answerable, self-contained passage, identify the sub-queries your target topics fan out into, and use structured data to label your content explicitly for AI retrieval systems. These practices serve AI search visibility and traditional SEO simultaneously, which is the most efficient possible use of your content investment in 2026.